Google recently introduced Lumiere, its latest AI model and a new text-to-video generator.

The technology, showcased in a paper and demo over the weekend, uses a novel diffusion model to generate a short video clip in a single pass. That sets it apart from existing generators, which piece together static frames.

Google Lumiere and Space-Time Diffusion for Video Generation

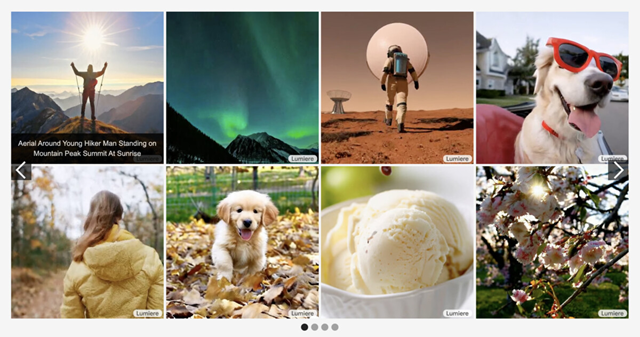

Lumiere's preview clips are impressive and show real progress in AI video generation. The model is built on a diffusion architecture called Space-Time U-Net (STUNet), which, as described in the accompanying research paper, generates a short video clip in a single pass rather than in separate stages.

STUNet generates a base frame from the text prompt, works out where objects in that frame are and how they will move, and produces a full-frame-rate video clip in a single pass. The approach builds on a pre-trained text-to-image diffusion model and, according to the paper, results in “realistic, diverse, and coherent motion.”

This marks a departure from existing AI video generators like Runway Gen-2 or Stable Video Diffusion, which generate a sparse set of static keyframes and then fill in the frames between them using temporal super-resolution.
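The difference between the two approaches can be sketched in a few lines of NumPy. This is only a toy illustration, not Lumiere's code: the function names are hypothetical, and linear interpolation and average pooling stand in for the learned networks a real generator would use. The first function mimics a keyframe pipeline, where frames are generated sparsely and the gaps are filled in afterwards. The other two mimic the idea the paper describes for STUNet, where the whole clip is compressed and restored in both space and time, so the model sees the full duration at once.

```python
import numpy as np

def temporal_super_resolution(keyframes: np.ndarray, factor: int) -> np.ndarray:
    """Fill in frames between sparse keyframes by linear interpolation.

    keyframes has shape (K, H, W, C); the result has (K - 1) * factor + 1 frames.
    Assumption: a real generator would use a learned model here, not a blend.
    """
    k = keyframes.shape[0]
    # Fractional keyframe index for every output frame.
    positions = np.linspace(0, k - 1, (k - 1) * factor + 1)
    lower = np.floor(positions).astype(int)
    upper = np.minimum(lower + 1, k - 1)
    weight = (positions - lower)[:, None, None, None]
    return (1 - weight) * keyframes[lower] + weight * keyframes[upper]

def space_time_downsample(video: np.ndarray, t: int, s: int) -> np.ndarray:
    """Average-pool a (T, H, W, C) clip by t in time and by s in height and width.

    T must be divisible by t, and H and W by s.
    """
    T, H, W, C = video.shape
    blocks = video.reshape(T // t, t, H // s, s, W // s, s, C)
    return blocks.mean(axis=(1, 3, 5))

def space_time_upsample(video: np.ndarray, t: int, s: int) -> np.ndarray:
    """Nearest-neighbour upsampling back to the original clip size."""
    return video.repeat(t, axis=0).repeat(s, axis=1).repeat(s, axis=2)

# Keyframe pipeline: 5 keyframes expanded to 17 frames after the fact.
keyframes = np.random.default_rng(0).random((5, 8, 8, 3))
print(temporal_super_resolution(keyframes, 4).shape)

# Space-time pipeline: the whole 16-frame clip is processed as one volume.
clip = np.random.default_rng(1).random((16, 8, 8, 3))
compact = space_time_downsample(clip, t=4, s=2)
print(compact.shape, space_time_upsample(compact, t=4, s=2).shape)
```

In the keyframe version, motion between keyframes is reconstructed from frames that were generated without knowledge of each other's in-between content. In the space-time version, every frame of the clip contributes to the compact representation from the start.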

Beyond text-to-video, Lumiere supports image-to-video generation, stylized video creation (producing videos in a specific style), cinemagraphs (animating a single element in an otherwise static image), and video inpainting, which lets users edit selected areas within a frame, such as changing the color of an element.

AI Video Generation is Here, or Almost Here

Lumiere is not yet available to the public, but its unveiling suggests that practical text-to-video tools are close. The preview reels are not entirely lifelike, yet they look more realistic than the output of existing tools like Meta's Emu or Stable Video Diffusion.

Google also acknowledges in its paper that Lumiere could be used to generate harmful or misleading content. The authors emphasize the need for tools that detect bias and malicious use, although they give no specifics on how such safeguards would be implemented.

One thing is certain: with several AI video generators already in development and the debut of Google Lumiere, we are steadily approaching an era in which text-to-video generators are readily accessible.

What are your thoughts on Google Lumiere? Are you eager to give it a try?